about me

Han Fang is an AI Research Scientist at Meta’s Superintelligence Labs, working at the frontier of Self Improvement & Agents. He founded Meta AI’s production post-training team and led production post-training for Llama 2 and Llama 3. He launched Meta AI in 2023 and scaled it to 1 billion MAU, driving integrated training runs, core capabilities, tool use, and the data flywheel. Most recently, he has been a core contributor to Agents in Muse Spark, driving agentic tool use to SoTA on MCP-Atlas.

Han holds a PhD in Applied Mathematics & Machine Learning and has published in top-tier venues, with 11K+ citations. He is a recipient of the President’s Award to Distinguished Doctoral Students, the Woo-Jong Kim Dissertation Award, and the Excellence in Research Award.

Google Scholar / CV / LinkedIn / Twitter

blog

The Central Dogma of Artificial Intelligence

February 2026

Every mature science has its central dogma. Biology has DNA → RNA → Protein. What is ours? Intelligence is the compression of experience into generalization.

The RL Environment Field Guide

January 2026

A practical guide to RL environments using Pokemon Red as a case study. Covers the agent-environment loop, observation spaces, reward design, and credit assignment.

Post-training 101: A Hitchhiker's Guide

September 2025

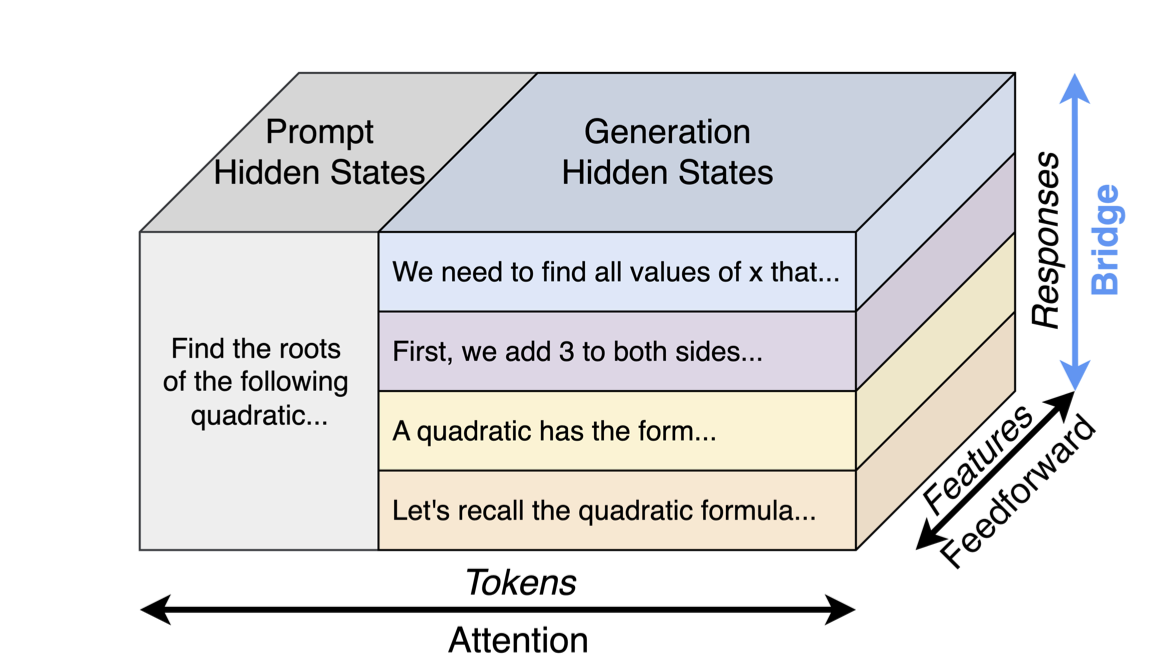

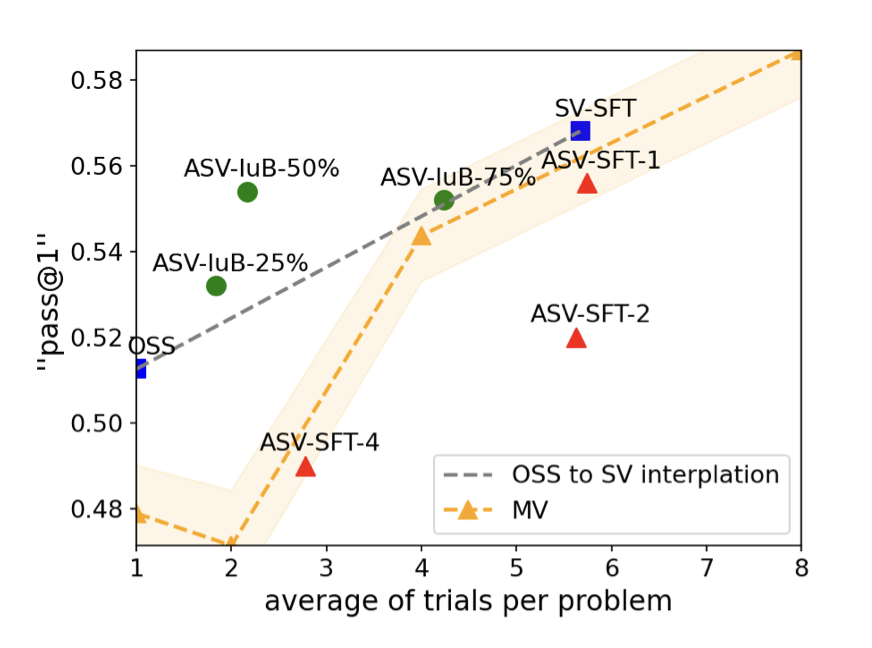

A comprehensive guide to post-training techniques for LLMs, covering supervised fine-tuning, RLHF, reward models, and practical implementation details.

news

Autodata: Automatic Data Scientist

AI agents that function as data scientists, iteratively building high-quality training and evaluation datasets. Agentic Self-Instruct converts inference compute into better data. Blog

2026

Launched Agentic Tool Use in Muse Spark

Excited to introduce Muse Spark 🥑, the first in the Muse family of models from Meta Superintelligence Labs. Natively multimodal reasoning with tool-use, visual chain of thought, and multi-agent orchestration. Drove agentic tool use capabilities to SoTA.

2026

Meta AI reached 1 billion MAU

Improved Meta AI's multilingual capabilities, enabling rollout to 12 languages and 40+ countries. Blog · News

2025

Launched voice mode and photo editing in Meta AI

Launched an updated Llama 3 model for voice mode. Improved the Planner for photo editing with multimodal inputs. Blog

Connect 2024

Launched Llama 3 on Meta AI

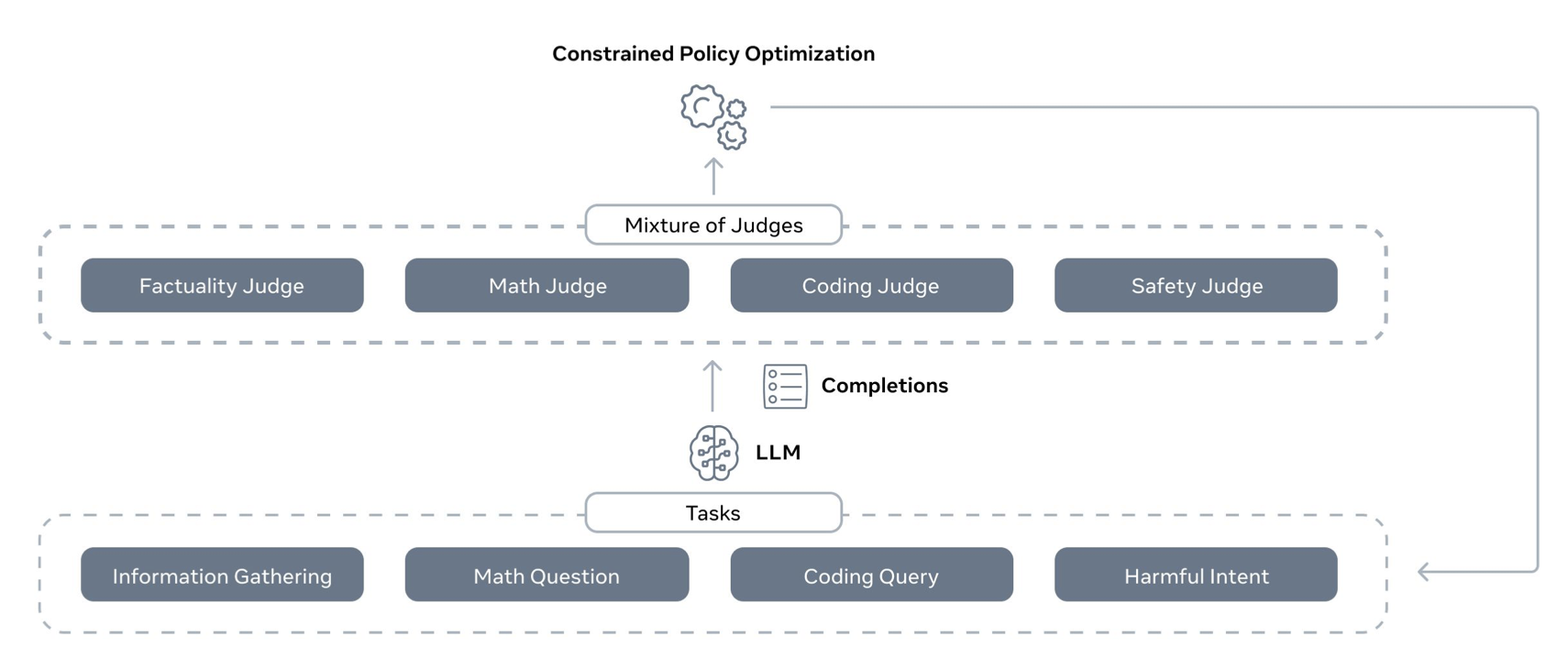

Developed Meta AI's online RL with Mixture of Judges, improving reasoning, instruction following, and safety. Blog

2024

Launched Meta AI with Llama 2

Debuted Meta AI across the Family of Apps. Created the Orchestrator for tool use. My talk at Connect

2023

Meta AI Few-Shot Learner (FSL)

Developed FSL for detecting new forms of harmful content across 100+ languages. Blog

2021

Training AI to detect hate speech

Built an RL-based framework to optimize hate speech classifiers end to end. Blog

2020